Multiobjective Tree-Structured Parzen Estimator (MOTPE): Bayesian Optimization for the Real World

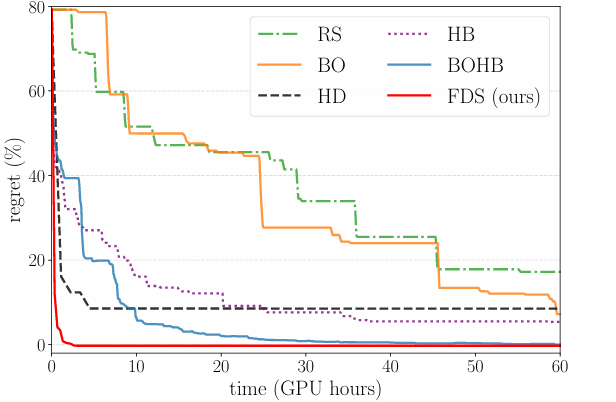

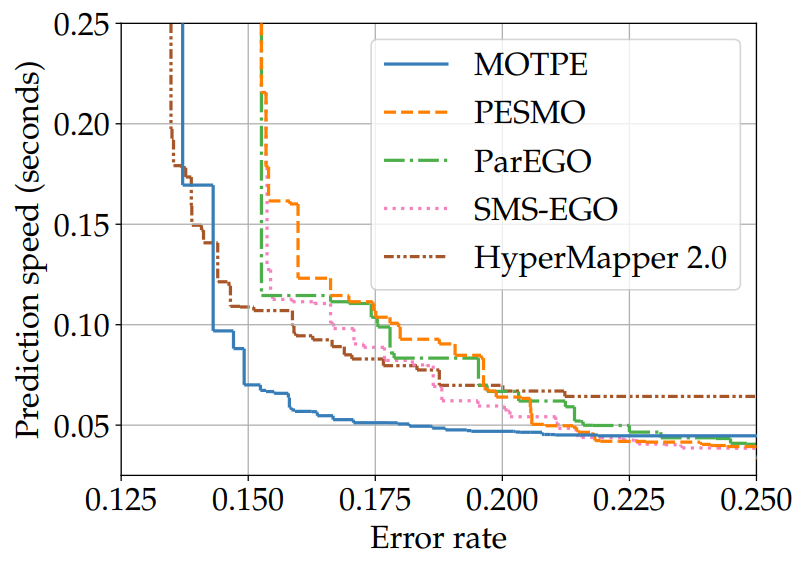

MOTPE extends the Tree-Structured Parzen Estimator (TPE) to multiobjective optimization, handling complex, conditional search spaces with high efficiency. It uses dominance-based splitting and hype...
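To make the idea of dominance-based splitting concrete, here is a minimal sketch in plain Python. It ranks observed objective vectors by nondomination (all objectives minimized) and places the best-ranked fraction in a "good" group, with the rest in a "bad" group, mirroring the good/bad split that TPE-style methods build their density estimates on. The function names, the `gamma` parameter, and its default value are hypothetical choices made for illustration. Ties inside the last admitted front are broken by insertion order, which is a simplification and not necessarily what MOTPE does.

```python
from typing import List, Sequence, Tuple

Point = Sequence[float]


def dominates(a: Point, b: Point) -> bool:
    """True if a is no worse than b everywhere and strictly better somewhere (minimization)."""
    return all(x <= y for x, y in zip(a, b)) and any(x < y for x, y in zip(a, b))


def nondomination_ranks(points: Sequence[Point]) -> List[int]:
    """Assign rank 0 to the Pareto front, rank 1 to the next front, and so on."""
    ranks = [0] * len(points)
    remaining = set(range(len(points)))
    rank = 0
    while remaining:
        # A point belongs to the current front if no other remaining point dominates it.
        front = {
            i for i in remaining
            if not any(dominates(points[j], points[i]) for j in remaining if j != i)
        }
        for i in front:
            ranks[i] = rank
        remaining -= front
        rank += 1
    return ranks


def split_by_dominance(
    points: Sequence[Point], gamma: float = 0.25
) -> Tuple[List[int], List[int]]:
    """Return (good_indices, bad_indices), with roughly a gamma fraction marked good.

    Assumption: ties within the last admitted front are broken by insertion
    order, purely to keep this sketch short.
    """
    n_good = max(1, int(gamma * len(points)))
    ranks = nondomination_ranks(points)
    order = sorted(range(len(points)), key=lambda i: (ranks[i], i))
    return order[:n_good], order[n_good:]


if __name__ == "__main__":
    observations = [(1, 4), (2, 2), (4, 1), (3, 3), (5, 5), (4, 4), (2, 5), (6, 6)]
    good, bad = split_by_dominance(observations, gamma=0.5)
    print("good:", [observations[i] for i in good])
    print("bad:", [observations[i] for i in bad])
```

In a full optimizer, the parameter settings behind the good and bad groups would each feed a separate density model, and new candidates would be chosen where the good density is high relative to the bad one. This sketch covers only the splitting step.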